Redundancy Mitigation: Towards Accurate and Efficient Image-Text Retrieval

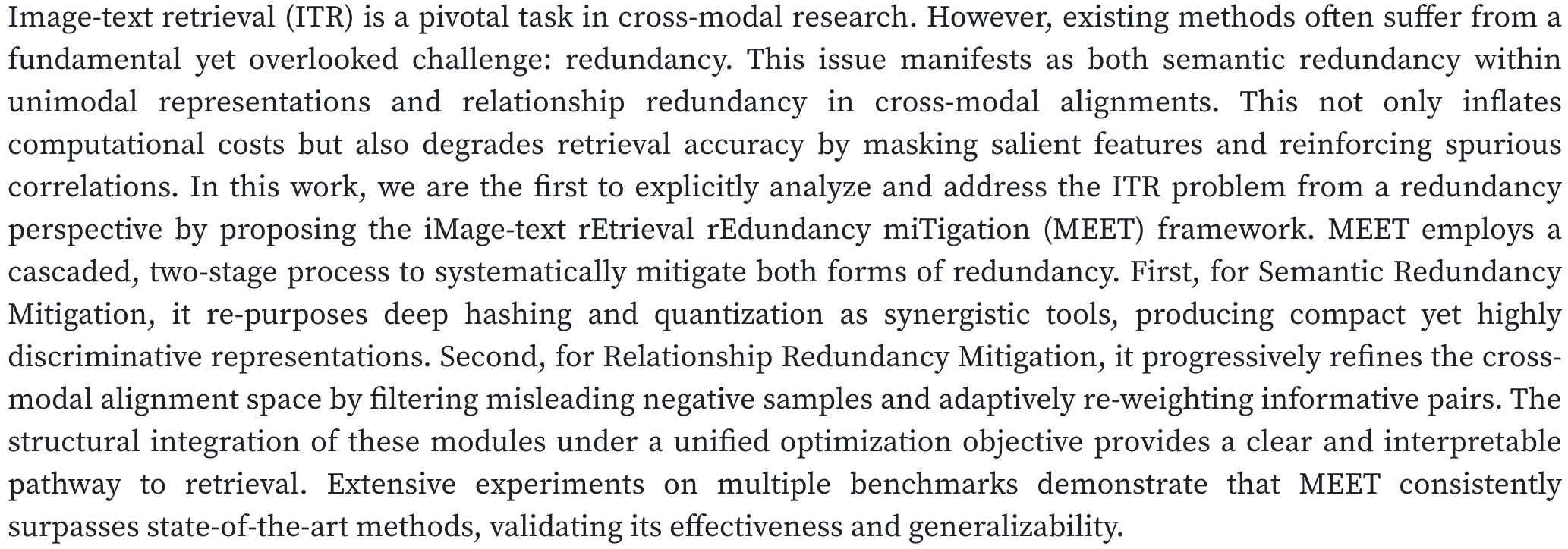

Abstract

TL;DR

Redundancy is the bottleneck: Unimodal semantic redundancy (uninformative/common features) and cross-modal relationship redundancy (spurious co-occurrences) jointly inflate compute and degrade retrieval accuracy.

Limits of prior approaches: Pure embedding optimization or heavy re-ranking often amplifies redundancy or sacrifices efficiency under large-scale retrieval.

Opportunity: If we first purify representations (mitigate semantic redundancy) and then refine alignments (mitigate relationship redundancy) under a unified objective, we can improve both accuracy and efficiency.

Semantic Redundancy

Generic phrases or cluttered visual regions mask salient semantics, hindering compact, discriminative representations.

Relationship Redundancy

Spurious cross-modal co-occurrences (e.g., common but irrelevant pairs) inflate similarity for incorrect matches.

Accuracy–Efficiency Tension

Fine-grained attention and re-ranking can help accuracy but are computationally heavy without early filtering.

"Redundancy Mitigation First, Alignment Refinement Second"

Explicitly view image–text retrieval (ITR) through a redundancy lens: purify semantics first, then refine cross-modal relationships under a unified objective.

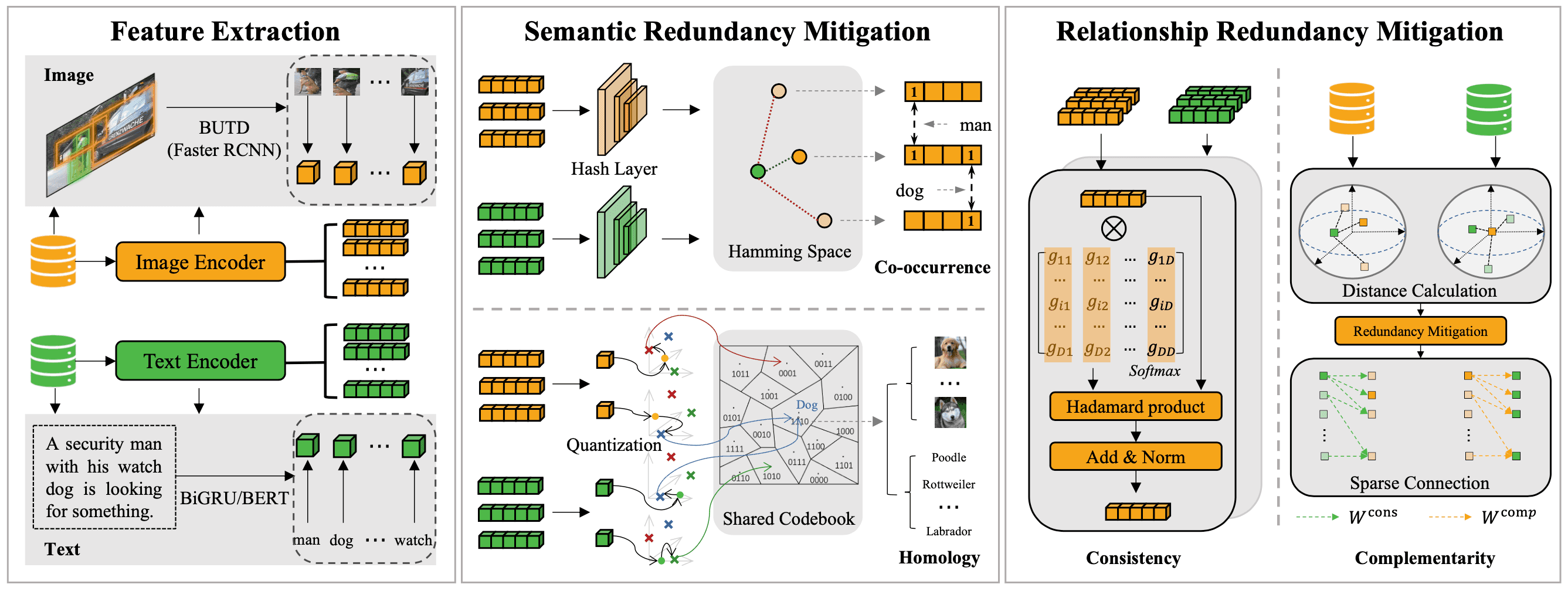

Stage I — Semantic Redundancy Mitigation

- Deep Hashing: learn compact codes; fast Hamming search prunes a large portion of candidates.

- Deep Quantization: shared codebooks plus asymmetric distance computation (ADC) for refined similarity; yields a stronger initial candidate set.

- Dual Filter: hash filter (α) → quantization filter (β) to obtain the initial candidate set T_init.
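
The Stage I cascade can be sketched in a few lines of plain Python. This is a minimal illustration, not the authors' implementation: it assumes α and β are the numbers of candidates kept by each filter, uses a product-quantization-style layout (one codebook per subspace) for the ADC step, and all names and toy values (`dual_filter`, `adc_table`, the codes and codebooks) are hypothetical.

```python
# Illustrative sketch only: function names, toy data, and the reading of
# alpha/beta as "number of candidates kept" are assumptions.

def hamming_distance(a: int, b: int) -> int:
    """Number of differing bits between two hash codes stored as ints."""
    return bin(a ^ b).count("1")


def adc_table(query, codebooks):
    """Precompute squared distances from each query sub-vector to every
    codeword of the matching codebook (the lookup table used by ADC)."""
    sub_dim = len(query) // len(codebooks)
    table = []
    for m, codebook in enumerate(codebooks):
        q_sub = query[m * sub_dim:(m + 1) * sub_dim]
        table.append([sum((q - c) ** 2 for q, c in zip(q_sub, word))
                      for word in codebook])
    return table


def dual_filter(query_hash, query_vec, db_hashes, db_codes, codebooks,
                alpha, beta):
    """Keep the alpha nearest items by Hamming distance, then the beta
    nearest of those by ADC distance. Returns database indices."""
    by_hamming = sorted(
        range(len(db_hashes)),
        key=lambda i: hamming_distance(query_hash, db_hashes[i]))
    survivors = by_hamming[:alpha]

    table = adc_table(query_vec, codebooks)

    def adc(i):
        # The query stays real-valued; only the database item is quantized.
        return sum(table[m][k] for m, k in enumerate(db_codes[i]))

    return sorted(survivors, key=adc)[:beta]


# Toy database: 4 items, 4-bit hash codes, 2 subspaces with 2 codewords each.
codebooks = [
    [[0.0, 0.0], [1.0, 1.0]],  # subspace 0
    [[0.0, 0.0], [1.0, 1.0]],  # subspace 1
]
db_hashes = [0b1010, 0b1011, 0b0101, 0b1000]
db_codes = [(1, 1), (1, 0), (0, 0), (0, 1)]

t_init = dual_filter(0b1010, [0.9, 1.1, 0.1, -0.1],
                     db_hashes, db_codes, codebooks, alpha=3, beta=2)
print(t_init)  # -> [1, 0]
```

The cheap Hamming pass discards item 2 outright, so the costlier ADC pass only scores three items; ADC then promotes item 1 above item 0 even though item 0 had the smaller Hamming distance.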

Stage II — Relationship Redundancy Mitigation

- Consistency Modeling: external-attention weighting between the image and its candidate texts to capture global semantic association.

- Complementarity Modeling: re-ranks candidates by how each text ranks the query image (top-z) to inject complementary signals; these are combined with the consistency scores to produce the final scores.
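
A rough sketch of the complementarity step follows. It assumes the consistency scores are already available as input, and it combines the two signals with a fixed additive bonus when the query image falls inside a text's top-z images; that combination rule, the `bonus` parameter, and all names and values are illustrative assumptions rather than the method's actual formulation.

```python
# Illustrative sketch only: the additive bonus and all names are assumptions.

def rerank(query_image, candidates, consistency, t2i_sim, z, bonus=0.5):
    """Re-rank candidate texts for one query image.

    candidates:  text ids from the initial candidate set
    consistency: dict text id -> consistency score (taken as given)
    t2i_sim:     dict text id -> dict image id -> text-to-image similarity
    """
    final = {}
    for t in candidates:
        # Rank all images from the text's point of view, best first.
        ranked_images = sorted(t2i_sim[t], key=t2i_sim[t].get, reverse=True)
        in_top_z = query_image in ranked_images[:z]
        final[t] = consistency[t] + (bonus if in_top_z else 0.0)
    return sorted(candidates, key=lambda t: final[t], reverse=True)


consistency = {"t1": 0.80, "t2": 0.75, "t3": 0.40}
t2i_sim = {
    "t1": {"img_q": 0.20, "img_a": 0.90, "img_b": 0.80},
    "t2": {"img_q": 0.95, "img_a": 0.30, "img_b": 0.10},
    "t3": {"img_q": 0.50, "img_a": 0.60, "img_b": 0.10},
}

order = rerank("img_q", ["t1", "t2", "t3"], consistency, t2i_sim, z=1)
print(order)  # -> ['t2', 't1', 't3']
```

Here t1 has the highest consistency score, but only t2 ranks the query image first in the reverse direction, so the reverse-rank signal moves t2 to the top.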

Unified Optimization

A feature-space discrepancy loss (L_f) and an encoding loss (L_q), weighted by β1 and β2, stabilize the hashing and quantization spaces while preserving the original similarities.
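
Read as a weighted sum, the unified objective would take the form below. The additive form is an assumption: this page names only the two losses and their weights, not how they are combined.

$$
\mathcal{L} = \beta_1 \, \mathcal{L}_f + \beta_2 \, \mathcal{L}_q
$$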

Accuracy & Efficiency

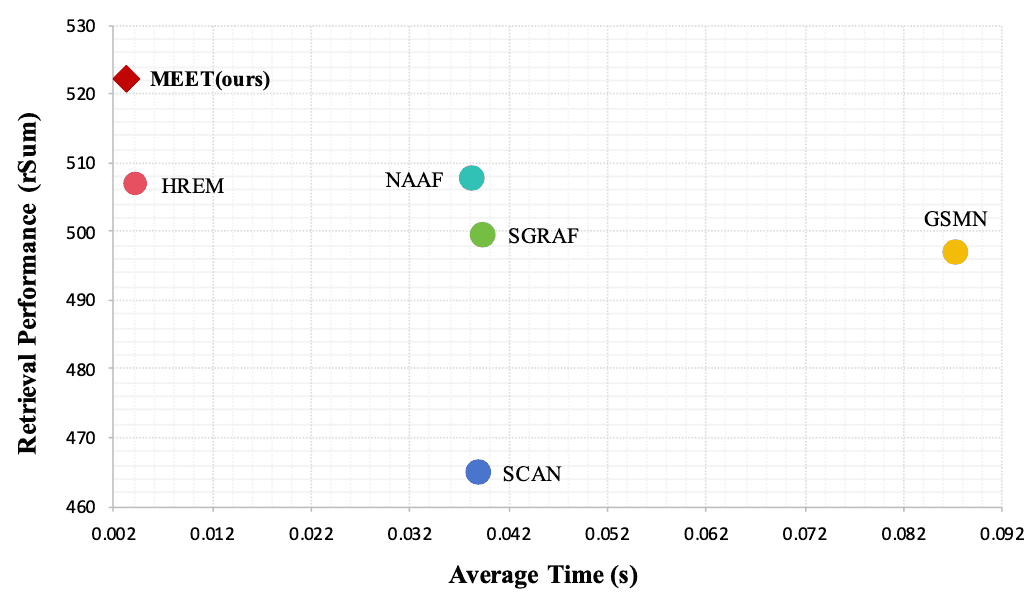

Dual filtering reduces compute while boosting top-rank accuracy; MEET achieves higher rSum with competitive time cost versus strong baselines.

Benchmark Gains

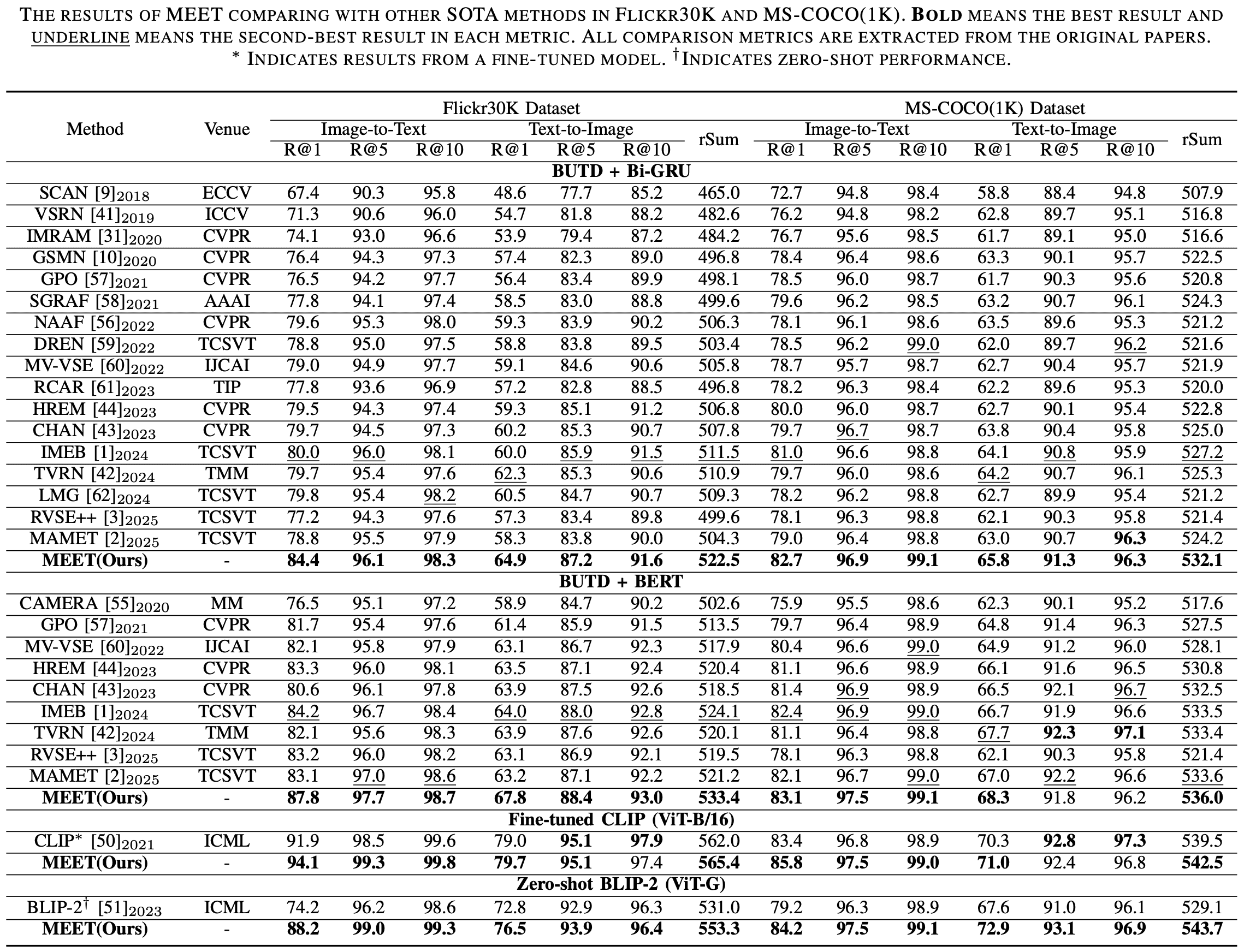

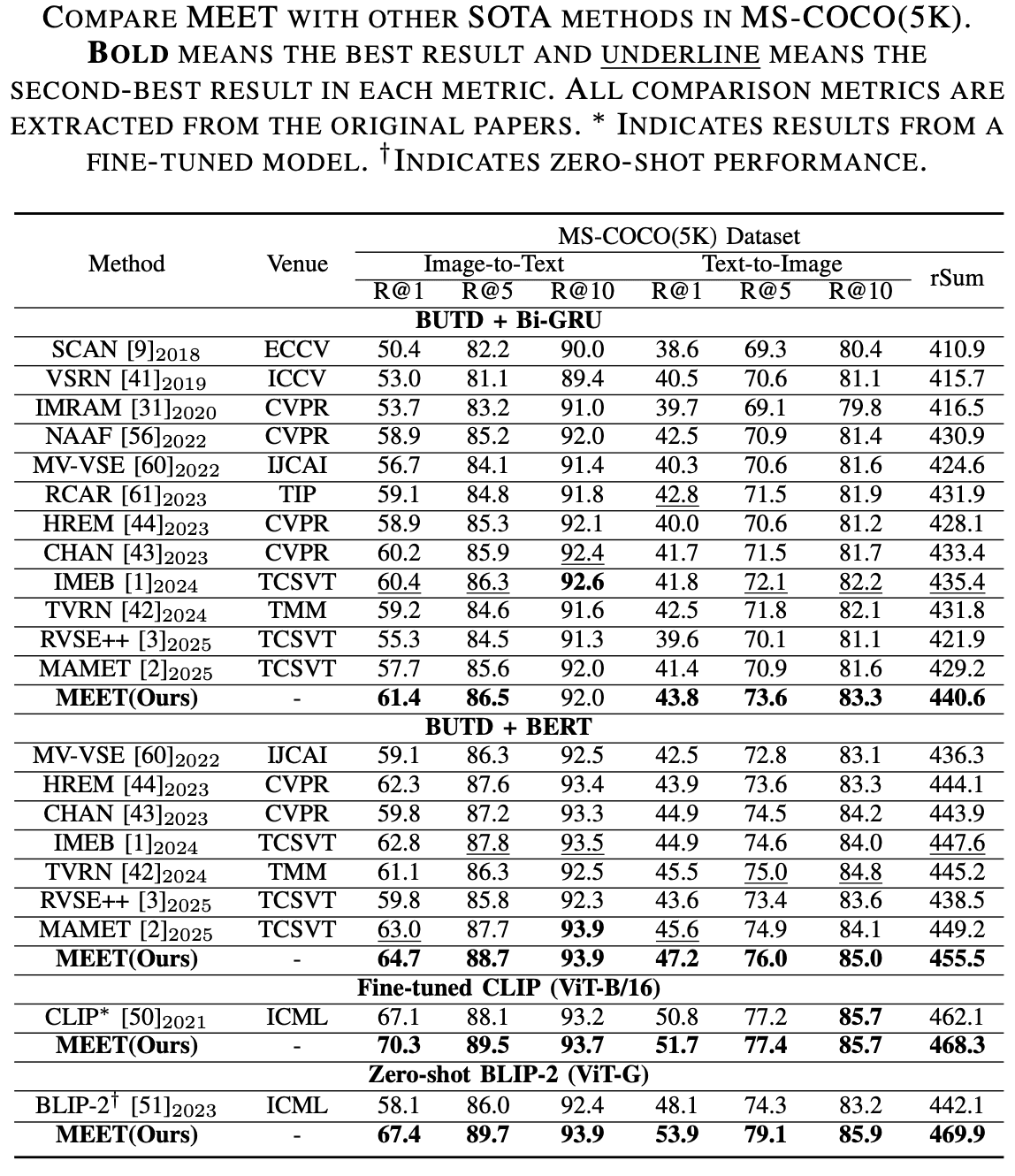

On Flickr30K and MS-COCO, MEET consistently surpasses prior state-of-the-art methods in rSum, with strong R@1 gains in both image-to-text (I2T) and text-to-image (T2I) retrieval.
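
For readers unfamiliar with the metric, rSum is conventionally the sum of R@1, R@5, and R@10 over both retrieval directions, so its maximum is 600. A minimal sketch of that computation, with made-up ranks that are not results from the paper:

```python
# Illustrative sketch only: the ranks below are invented toy values.

def recall_at_k(ranks, k):
    """Percentage of queries whose first correct match is ranked within
    the top k (ranks are 1-based)."""
    return 100.0 * sum(r <= k for r in ranks) / len(ranks)


def rsum(i2t_ranks, t2i_ranks, ks=(1, 5, 10)):
    """Sum of R@K over both directions and all cutoffs in ks."""
    return sum(recall_at_k(ranks, k)
               for ranks in (i2t_ranks, t2i_ranks)
               for k in ks)


i2t_ranks = [1, 3, 12, 1]   # rank of the first correct text per image query
t2i_ranks = [2, 1, 7, 20]   # rank of the correct image per text query

print(rsum(i2t_ranks, t2i_ranks))  # -> 350.0
```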

Robust Design

Ablations show that both stages (I and II) and both losses (L_f and L_q) are essential; performance remains stable across the β1/β2, α/β, and z settings.

BibTeX

@article{wang2025meet,
  title={Redundancy Mitigation: Towards Accurate and Efficient Image-Text Retrieval},
  author={Wang, Kun and Hu, Yupeng and Liu, Hao and Jie, Lirong and Nie, Liqiang},
  journal={IEEE Transactions on Circuits and Systems for Video Technology},
  year={2025}
}